Washington — Self-driving car advocates are scrambling to contain the fallout of the fatal crash of a 2015 Tesla Model S that was operating with its automated driving system activated.

The news of the fatality — believed to be the first death in a car engaged in a semi-autonomous driving feature — came as federal regulators prepare to unveil regulations for testing of fully automated cars this summer. Their preparations are being closely watched by supporters and critics of the self-driving technology.

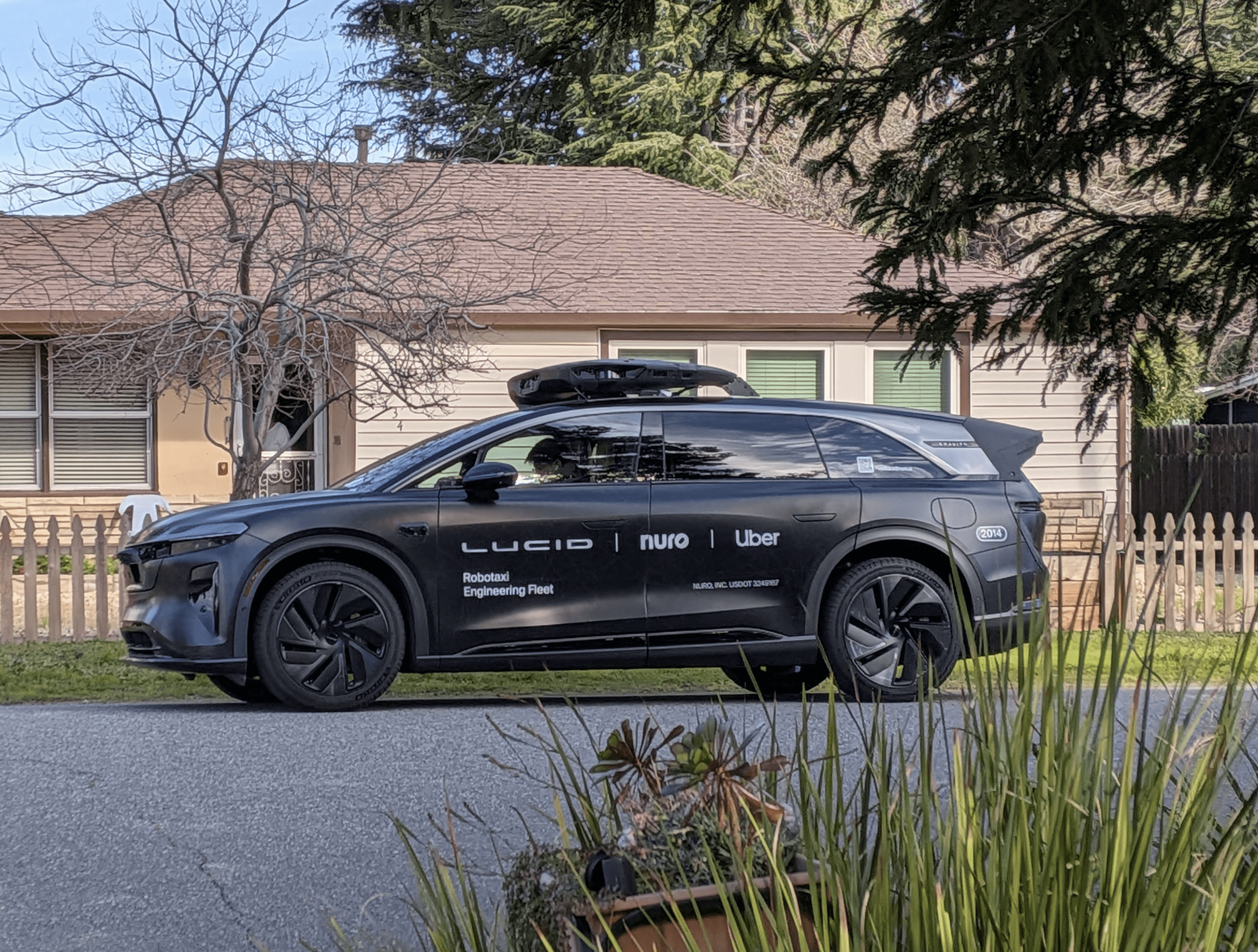

David Strickland is a former National Highway Traffic Safety Administration chief who is lobbying for self-driving cars on behalf of Ford, Volvo, Google, Uber and Lyft. He said Tesla’s Autopilot system “is not a self-driving system.”

“It’s an enhanced driver-assist,” Strickland said, noting that the lobby group he represents — Self-Driving Coalition for Safer Streets — is pushing for fully autonomous cars. “For us, the notion is full self-driving where you have no expectation of the driver being pulled back in to driving,” he said.

Federal regulators say preliminary reports show the Tesla crash happened when a semi-trailer rig turned left in front of the car that was in Autopilot mode at a highway intersection. Florida police said the roof of the car struck the underside of the trailer and the car passed beneath. The driver was dead at the scene.

“Neither Autopilot nor the driver noticed the white side of the tractor-trailer against a brightly lit sky, so the brake was not applied,” Tesla said in a blog posting June 30.

Strickland said self-driving technology holds too much promise to discard because of the fatal accident. “Every company in our coalition is still working very hard to test and test safely because we’re operating toward a goal of full self-driving.”

Michael Harley, senior analyst for Kelley Blue Book, said the fatal crash probably will set back development of self-driving cars.

“Unfortunately the biggest issue we have right now is public perception,” he said. “The public is pretty used to seeing a couple of thousand people every day die on roads, but you get one report of automated vehicle fatality and it’s headlines everywhere.”

Still, Harley said “long-term, autonomous vehicles are the way to go.”

“It’s probably going to drop the death rate on U.S. highways by 80 to 90 percent alone, just taking away the humans [from driving],” he said. “Humans are the ones making the errors.”

Harley said Tesla’s cars are not self-driving, even though the company has heavily promoted its Autopilot feature. “Right now on the scale of autonomous vehicles, we’re at a one or two,” he said. “We’re at the driver-assistance level.”

Ron Montoya, consumer advice editor for Edmunds.com, believes the Tesla crash will cause regulators to become more cautious.

“One of the things people might want to lean toward is something like California, where the driver has to be in the car at all times,” he said, referring to proposed regulations in California that would require a licensed driver — and a steering wheel — to be in the car at all times.

Montoya said requiring licensed drivers to be at the wheel “kind of goes against the point of self-driving.” But he said “there is probably going to be a greater emphasis on holding the driver responsible for paying attention.”

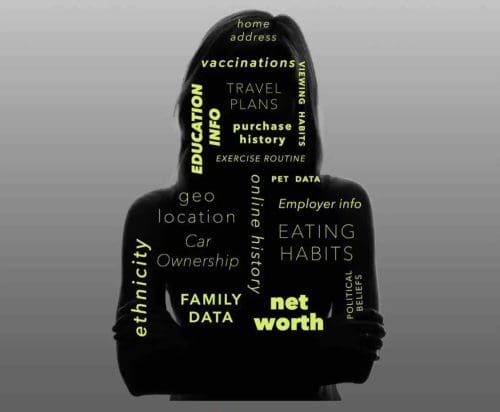

“There is concern that when we reduce the task of driving, we find other things to do,” he said. “The challenge is how do we re-engage the driver? If the vehicle is capable of doing most of the task of driving, what do we do with that downtime and how do we adjust when the system asks us to take control?”

Tesla has said it disables Autopilot by default and requires explicit acknowledgment that the system is new technology and still in a public beta phase before it can be enabled.

“When drivers activate Autopilot, the acknowledgment box explains, among other things, that Autopilot ‘is an assist feature that requires you to keep your hands on the steering wheel at all times,’ and that ‘you need to maintain control and responsibility for your vehicle’ while using it,” the company wrote in a blog post when news of the fatal accident was released June 30.

“As more real-world miles accumulate and the software logic accounts for increasingly rare events, the probability of injury will keep decreasing,” the company continued. “Autopilot is getting better all the time, but it is not perfect and still requires the driver to remain alert.”

Critics have charged that Tesla is responsible for giving drivers the idea that its Autopilot is more advanced than it really is.

“We really felt that Tesla is rushing out there putting something out that’s not safe,” John Simpson, privacy project director at the Santa Monica, California-based Consumer Watchdog group, said in a telephone interview. “If you can’t tell the difference between a white truck and the white sky, that’s not very safe.”

Simpson said the Tesla crash should “give everybody pause about the actual state of the technology” behind self-driving cars.

“Tesla’s fundamental problem is that they’re out there in beta mode,” he said. “They’re testing something and they’re relying on their customers to be their guinea pigs. They’re in that kind of Silicon Valley mindset where you put it out there and get all the feedback, but that doesn’t work here.”

Simpson said driver-assist features that are not fully autonomous can be used to improve safety, as long as drivers aren’t led to believe they do not have to oversee a car’s operation.

“There are clearly elements of self-driving technology that aren’t totally autonomous that if installed in vehicles can work to augment safety. But people have to understand them,” he said.

Twitter: @Keith_Laing