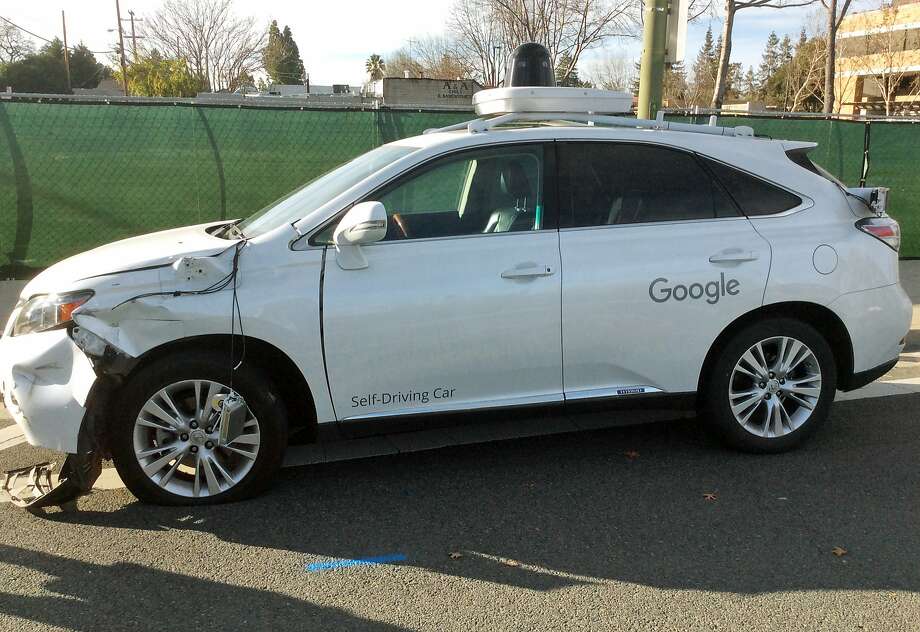

As automakers race to create cars that can drive themselves, a growing chorus of consumer advocates is demanding that the companies — and their federal regulators — slow down.

The fatal crash of a Tesla Motors Model S operating in its self-steering Autopilot mode has thrown the issue into sharp relief.

Consumer Reports on Thursday called on Tesla to disable the Autopilot software until it can require drivers to pay stricter attention. The influential publication also urged Tesla to change the system’s name, arguing that “Autopilot” can lull drivers into a false sense of security.

On Friday, Sen. John Thune, chairman of the Senate Committee on Commerce, Science and Transportation, sent Tesla CEO Elon Musk a letter asking that the company brief the committee staff on details of the Model S accident and the workings of the Autopilot technology by the end of the month.

A group of consumer advocates, including a former head of the National Highway Traffic Safety Administration, sent President Obama a letter Wednesday, accusing the federal government of “undue haste” in pushing autonomous vehicle technology. Rather than drafting enforceable regulations, the Department of Transportation is expected to introduce “guidelines” this month for the technology’s development, testing and rollout.

“Guidance is meaningless and toothless,” said John Simpson with Consumer Watchdog, one of the organizations that drafted the letter. “When you’re talking about potentially life-threatening technologies, you need real standards that can be enforced.”

Simpson and some of the technology’s other critics agree that self-driving cars could one day prove beneficial — after they’ve been proved safe. They could, for example, greatly expand the mobility of disabled people or the elderly.

“The problem is that dream, that vision, may be decades away,” Simpson said. “And the companies trying to develop that vision are rushing it.”

Tesla’s Autopilot system, which was introduced last year, helps cars stay in their lanes. It is also supposed to react to cars around it, so if someone cuts in front, the car slows down. Other companies also offer “lane-assist” systems that help steer on the highway.

But the public rollout of fully autonomous cars remains years away. In the meantime, the automakers don’t want to rush regulations.

Glen DeVos, vice president of engineering and services with Delphi Automotive, said regulations should be based on more experience and data than car companies and government regulators currently have.

“I don’t expect (federal regulators) to have all the answers now,” DeVos said. “What’s important is to have a thoughtful process for this.”

Delphi, one of the world’s largest auto parts suppliers, last year sent a self-driving Audi on a cross-country road trip from San Francisco to New York. In advance, the company approached officials in the states along the route and found a patchwork of regulations that could apply to a car steering itself.

“The biggest thing, from our point of view, is to avoid the proliferation of different rules in state after state,” DeVos said.

The federal government has actively encouraged the development of autonomous cars, by traditional automakers as well as Tesla and Alphabet, the parent company of Google. Transportation Department officials see in them the potential to save many if not most of the people who die on the road each year, with last year’s death toll topping 35,000.

“In the United States, we lose the equivalent of a fully loaded 747 crashing every single week due to roadway fatalities,” said Mark Rosekind, administrator of the National Highway Traffic Safety Administration, during an April meeting at Stanford University on self-driving cars. “These numbers are exactly the reason we are here today.”

The Department of Transportation, he said, was “leaning forward on automated vehicles” and developing guidelines for their safe introduction. With the technology already being tested on public streets and roads, he said, the department needed to move quickly.

Simpson and other critics, however, argue that federal officials are giving automakers too much leeway, and they point to the Tesla crash as proof.

In the May 7 crash, which the company first discussed publicly on June 30, a Model S on Autopilot smashed into a truck crossing the highway in front of it. According to the company, the system did not distinguish between the truck’s side, painted white, and the bright sky behind it. Neither the car nor the driver — Joshua Brown of Canton, Ohio — hit the brakes.

“‘Autopilot’ technology that cannot sense a white truck in its path, and that fails to brake when a collision is imminent, has no place on the public roads,” read the letter that Consumer Watchdog sent to Obama. The letter was also signed by the Center for Auto Safety, Consumers for Auto Reliability and Safety, and Joan Claybrook, head of the highway safety agency during the Carter administration.

Tesla describes Autopilot as still being in its testing phase. Before the company activates the Autopilot software in a car, the driver must acknowledge that he or she is responsible for maintaining control of the vehicle.

Tesla rejects the notion that the system was not adequately tested before its public introduction. Brown’s death was the first fatality in 130 million miles of Autopilot driving racked up by Tesla’s electric cars, according to the company. Tesla says the U.S. average is one fatality per 94 million miles.

“We do not and will not release any features or capabilities that have not been robustly validated in-house and will continue to leverage the enormous amount of insight this process provides to advance the state of the art in advanced driver assistance systems,” a Tesla spokeswoman said in an email.

David R. Baker is a San Francisco Chronicle staff writer. Twitter: @DavidBakerSF