Op-Ed Commentary by Joan Claybrook and Jamie Court. Joan Claybrook is a former administrator of the National Highway Traffic Safety Administration. Jamie Court is president of Consumer Watchdog.

A man died because his self-driving car couldn’t drive. His Tesla Model S, running on Autopilot, crashed into a white semi in Florida after the system mistook the truck for bright sky. This makes Joshua Brown, 40, the first casualty of both a robot car’s failures and automakers’ and regulators’ failure to take its hazards seriously.

Far from offering public warnings, Tesla and Obama administration transportation safety officials hid Brown’s death from the public, investors and other government officials for nearly two months. In the wake of the disclosure, reports emerged about other Autopilot failures, but neither the carmaker nor federal authorities have yet recalled the defective Autopilot.

Tesla argues that its technology is sound and that drivers should have their hands on the wheel, ready for problems. But an Autopilot that cannot distinguish a white truck from a white sky is not ready for the road. It’s shocking that federal safety regulators did not require testing the system to prevent Tesla’s occupants from being human guinea pigs.

The episode highlights how the hype over robot cars has proved an opioid for Obama administration officials, who are racing to issue new robot car “guidelines,” not mandatory safety regulations subject to public review, before the president’s term is up. This acceleration could come this week when Transportation Secretary Anthony Foxx and National Highway Traffic Safety Administration chief Mark Rosekind are in San Francisco, where they are expected to announce fast-track guidelines at a robot-car symposium.

Foxx and Rosekind have demonstrated a pattern of private decision-making with robot carmakers that excludes the public and puts us at risk. They recently announced a private, voluntary agreement with 20 automakers for installation of semiautonomous Automatic Emergency Braking that, despite citizen pleas, bypassed a transparent rulemaking process with public participation. This unusual circumvention of public process was followed by hearings on robot car “guidelines,” but without any draft “guidelines” on which to comment, once again excluding effective participation. Earlier, the NHTSA had issued a legal “interpretation” that computers are equivalent to drivers, without public notice, without soliciting comments and without coordinating with state officials who might disagree.

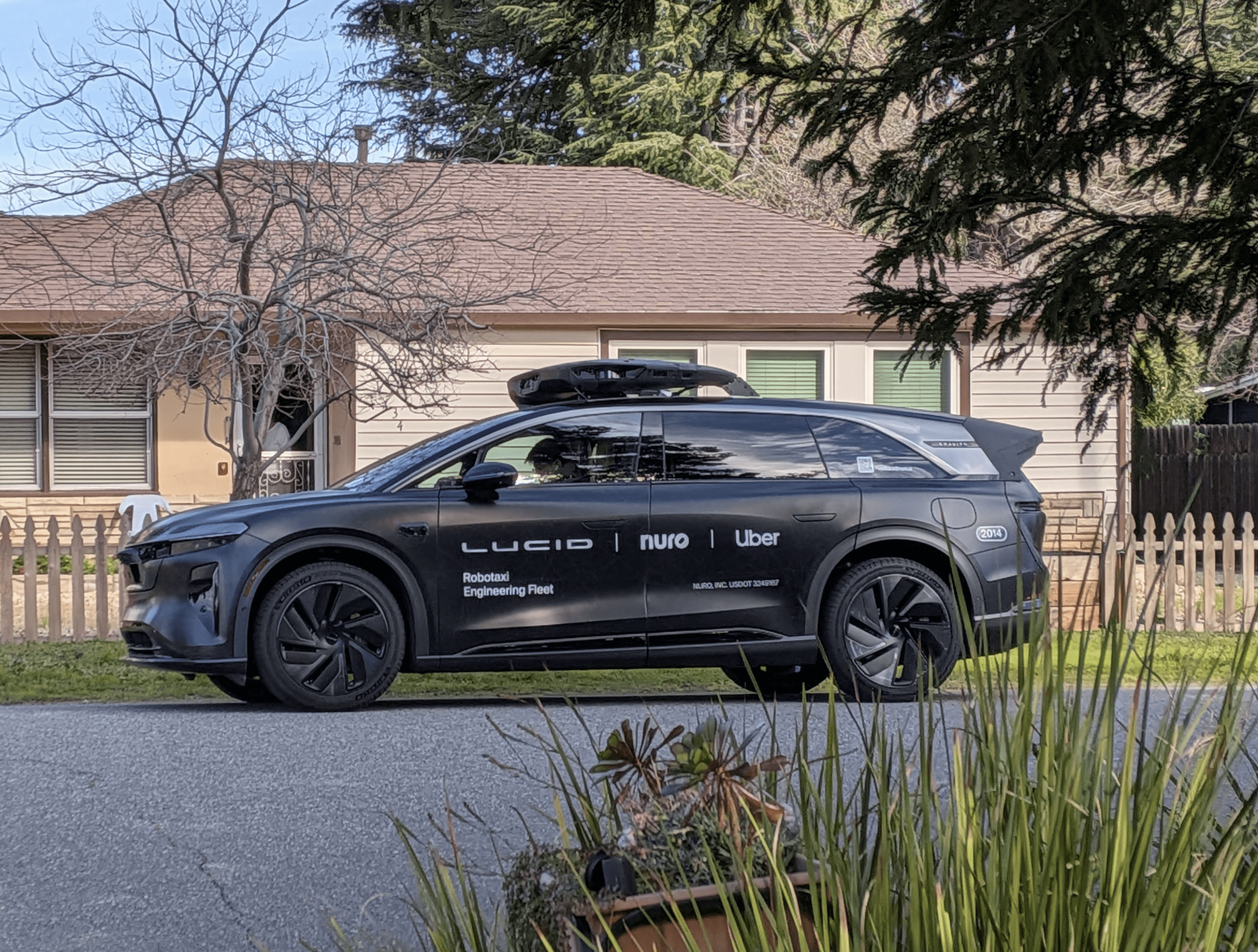

Testing in California proves robot cars are not ready for the road without enforceable rules and substantial oversight. Reports filed with the California Department of Motor Vehicles by Google show its self-driving technology failed 341 times in 424,000 miles driven, forcing human drivers to take over.

Google’s cars were often not capable of “seeing” pedestrians, cyclists and low-hanging branches, or dealing with emergency vehicles, potholes or bad weather, let alone the human-driven cars all around them. Nonetheless, Google insists on building its first generation of robot cars without steering wheels, brakes or accelerators that allow human drivers to take back control of the vehicle. California regulators have not endorsed Google’s plan, so Google, a major Obama supporter, has turned to the feds.

The only safe road forward is a sober vision of robot-car development that does not put the rush to newer technology above the values of safety, transparency and accountability.

President Obama should withhold any industry “guidance” that has not gone through a public rulemaking process. Carmakers like Tesla must stop blaming victims and take legal responsibility for Autopilot failures. Volvo and Mercedes have pledged to do that. All other robot carmakers should follow.

This week, San Francisco can pave the way for robot car safety or rush onto the road an imperfect technology that could cause mayhem.