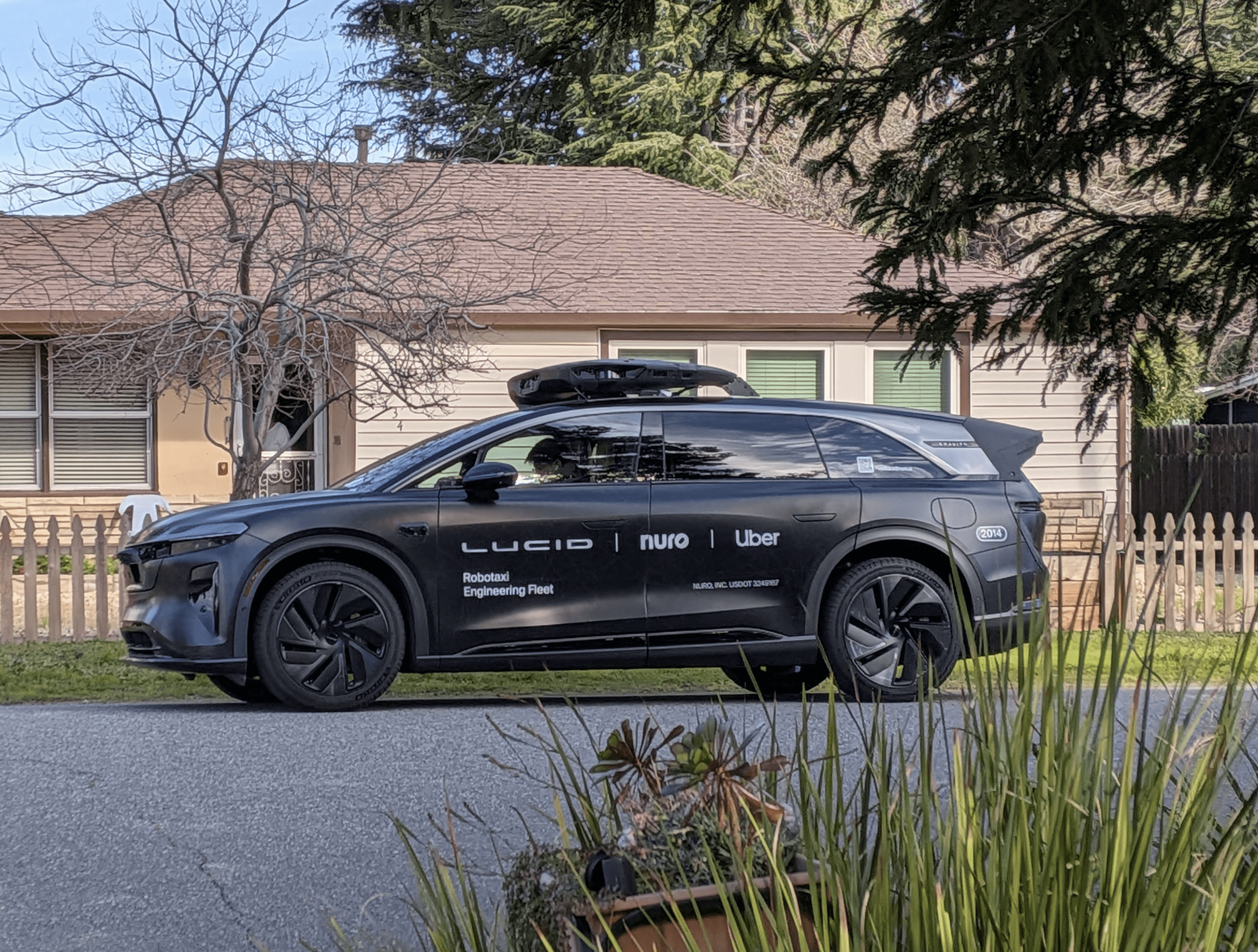

If there's one priority for self-driving cars upon which everyone can agree, it's safety. Google and other manufacturers are quick to tout autonomous vehicles as a way to prevent around 33,000 U.S. deaths and many more injuries from auto-related crashes each year. But for all the triumphs of autonomous cars, regulators and consumer advocates warn there are too many risks to give these vehicles free rein on public roads.

This debate over an emerging technology spilled onto the public stage last week as officials with the California Department of Motor Vehicles convened their first workshop on proposed rules for the everyday operation of autonomous vehicles.

As ordered by state lawmakers, DMV regulators in December released their long-awaited first draft of rules, which were widely seen as throwing a wet blanket on hot technology. The proposed rules emphasize caution, mandating that any self-driving cars must have a licensed driver behind the wheel ready to take control in case the car's autopilot fails.

To hear some explain it at the workshop in Sacramento on Jan. 28, that stipulation hobbles the technology's most compelling achievement. A large number of speakers who represent the disabled and elderly blasted the rules for holding back what could be a new means of independent transit for those who can't drive. Since it is impossible for many with disabilities to get a driver's license, the DMV's proposed rules are tantamount to excluding those riders, said Teresa Favuzzi, executive director of the California Foundation for Independent Living Centers.

"We look at the potential of a fully autonomous self-driving car as nothing short of revolutionary for the disability community," she said. "Hold manufacturers to safety requirements, but don't (keep) us out of this revolution."

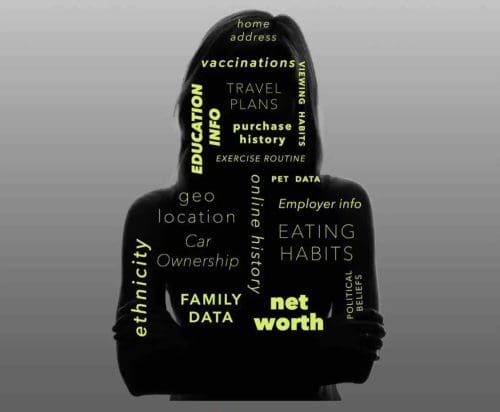

Explaining their rationale, DMV officials noted that it was premature to allow computer-guided cars free rein on public streets, but the agency hinted that those rules could be loosened down the road. Officials also set standards for cybersecurity, making it mandatory for cars to alert drivers about software breaches and to inform riders of any private data being culled from their vehicles. Any cars being rolled out for the consumer market will be required to undergo testing and certification from an independent testing organization.

By all accounts, getting a self-driving car to the consumer market is still years away, but the aspirations for the technology continue to mount. Beyond reducing accident rates, enthusiasts believe the cars could solve some of the worst problems of auto culture. With the cars in control, the daily commute could provide time for riders to relax, catch some extra sleep or get started on the workday. In the long term, experts anticipate the technology will herald more automation and machine-to-machine communication in a wide variety of other consumer applications.

But the scope of this technology presents some major questions. John Simpson, an advocate with Consumer Watchdog, warned that cybersecurity, data collection and a host of other areas need regulation that manufacturers shouldn't be allowed to dictate. He urged the DMV officials to determine what safety data is necessary to collect from cars, and to limit with whom car makers can share that information.

Unlike many of the other speakers, Simpson championed the DMV's requirement for licensed drivers. He pointed out that many self-driving cars currently being tested were routinely being "disengaged" to manual control, indicating self-driving cars still need the proverbial training wheels. For the first time in December, the DMV began requiring testing companies to disclose these disengagements. Over a period of 15 months, Google reported disengaging 341 times, of which 13 could have resulted in an autopilot accident, Simpson said.

"Despite what Google says about being deeply disappointed, you have the responsibility for safety for California drivers and other people, and you got it exactly right here," Simpson said. "I want to commend the DMV for putting safety first."

Speaking for Google's self-driving project, lead engineer Chris Urmson pointed out the company is rapidly improving the performance of its fleet, currently at 45 cars in Mountain View. Some of the close-call incidents requiring manual override were little more than the car almost bumping a traffic cone, he said. Ongoing testing will bring the cars to the point where they can reliably function as safe drivers.

"We recognize we have a lot of work to do, but the DMV has to act to allow this technology into the marketplace," he said. "We have to avoid the presumption that having a person behind the wheel will make this more safe."

Earlier this week, Google officials announced they would also continue testing their self-driving cars in Kirkland, Wash., in addition to Mountain View and Austin, Texas.

As the discussion continued over five hours, DMV officials urged the stakeholders in attendance to cite specific areas of ambiguity in their draft regulations. Brian Soublet, an attorney representing the DMV, thanked speakers in turn, but he declined to signal what, if any, changes his team would consider.

"The goal at the end of the day is to have regulations that can get approved, and we can get into business of having these cars on the streets," Soublet said.

DMV officials could not say when a final set of regulations would be completed.