First Death Brings Unanswered Questions, Rattles the Self-Driving Future

By Pete Bigelow, CAR AND DRIVER

March 20, 2018

Long before an Arizona woman stepped off a curb and into transportation history under cover of darkness Sunday night, some circumstances surrounding her death in a collision with an Uber self-driving test vehicle had already been determined.

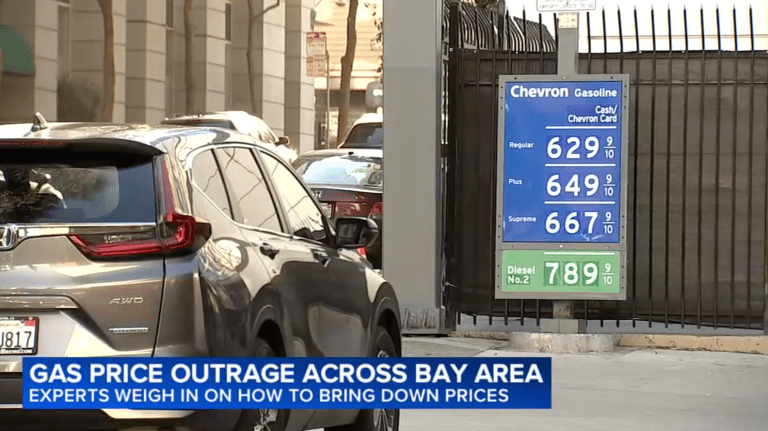

As in so many other places in the Sun Belt, traffic engineers had built this road in Tempe with cars foremost in mind. Mill Avenue stretches five full lanes across at the point where she attempted to cross: two for vehicles turning left at the upcoming intersection with Curry Road, two for vehicles traveling straight, and a right-turn lane at the far side. Wide roadways like this are common sights in Arizona, a state with the fourth-highest rate of pedestrian fatalities in the country.

Paths crisscross a shrub-covered island that separates the northbound lanes of Mill Avenue from the southbound ones. A sign deters pedestrians from crossing there, instead instructing them to use the crosswalk at the intersection ahead. But depending on exactly where Elaine Herzberg attempted to cross—and police haven’t released precise information on the point of impact yet—that crosswalk was at least several dozen feet away, and perhaps several hundred.

When she stepped off the curb, pushing her bicycle, she entered a road environment where the state’s governor had eagerly welcomed self-driving vehicles and the companies testing this fledgling technology. In December 2016, when Uber ran afoul of California regulations that mandated the company hold a permit for testing, Gov. Doug Ducey wooed the company, saying, “This is about economic development, but it’s also about changing the way we live and work. Arizona is proud to be open for business.”

Only 18 days before Herzberg stepped in front of one of those same Uber vehicles, Ducey affirmed that commitment, signing an executive order on March 1 that made it clear that vehicles operating without a human safety driver behind the wheel were expressly permitted on these roads, an extension of what a written statement from the governor’s office indicated was a need “to eliminate unnecessary regulations and hurdles to the new technology.”

The crash occurred at approximately 10 p.m. Sunday, when the self-driving Uber SUV struck Herzberg as she crossed Mill Avenue from west to east. The regulatory environment and infrastructure design certainly appear to have contributed to the first traffic death involving a fully autonomous vehicle in United States history, but they do not explain why it happened. One night later, there were more questions than answers.

Among them:

- Why did the self-driving system aboard this Uber, a rejiggered Volvo XC90, not detect and respond to Herzberg entering the road? While it investigates the answer, Uber has suspended all its self-driving-vehicle operations in San Francisco, Tempe, Pittsburgh, and Toronto.

- Most companies developing this fledgling technology have tested their self-driving systems against tens of thousands of scenarios in both simulation and real-world testing. Those include encounters with pedestrians and bicyclists, but did Uber’s include a pedestrian pushing a bicycle? How did the company’s algorithms interpret that?

- Although Arizona permits fully driverless vehicles, the Uber involved in Sunday’s crash had a safety driver, a de facto human fail-safe for the machine, behind the wheel. Why did that driver, Rafael Vasquez, not react in time to avoid or minimize the impact? Tempe police say their preliminary investigation “did not show significant signs of the vehicle slowing down.”

Uber acknowledged Monday that the vehicle was operating in autonomous mode at the time of the crash, which occurred as the car traveled 40 mph in a 45-mph zone. The company said it is cooperating with investigators and expressed its condolences to the friends and family of the victim.

Video footage will help provide further answers. Uber equipped the vehicle with front-facing cameras that show the road ahead and inward-facing cameras that show the safety driver. Sgt. Ronald Elcock of the Tempe Police Department said Monday he had viewed the footage, and although he declined to reveal specifics, he said, “It will definitely assist in the investigation, without a doubt.”

NTSB Again in Leadership Role

Further answers will come from the National Transportation Safety Board, which sent a team of four investigators to Tempe on Monday to begin probing the vehicle’s interaction with the environment, other vehicles, and vulnerable road users. This marks the third time the NTSB, an independent federal agency, has embarked on an investigation involving automated-vehicle technology.

There is an ongoing investigation of a fender bender in Las Vegas involving a human-driven delivery truck and an autonomous shuttle operated by Navya. Previously, the NTSB investigated a May 2016 crash in Williston, Florida, that claimed the life of Joshua Brown, a 40-year-old Ohio resident who had the Autopilot feature in his Tesla Model S engaged at the time he crashed into the side of a tractor-trailer that crossed the two-lane highway on which he was traveling.

Although the Tesla system is called Autopilot, there’s a potentially life-and-death distinction between the way the Tesla feature is intended for use and the way Uber’s self-driving system is designed. The Tesla Autopilot feature is a semi-autonomous technology; it requires a human driver who is responsible for vehicle operations at all times. In Uber’s case, the system is responsible for all vehicle actions—or, in the Tempe case, inactions.

If there’s a similarity between the two cases that had fatalities, it’s that both involved humans behind the wheel of a vehicle who ultimately did not react in time to prevent a death. Last fall, the NTSB issued recommendations stemming from the Tesla crash, among them one urging manufacturers to develop ways to more effectively sense a driver’s level of engagement and alert them when their engagement in the driving task is lacking.

Establishing the nature of interactions between man and machine, and perhaps the complacent faith the former holds in the latter, may be a central part of determining what happened in Tempe.

Federal Legislation May Be in Flux

At the very least, the NTSB’s involvement again shows the leadership role the independent safety agency can play at a time when the Trump administration and the Department of Transportation have unveiled a federal automated-vehicle policy that requires nothing from companies testing self-driving technology. DOT Secretary Elaine Chao has suggested that automakers and tech companies like Uber submit voluntary assessments of their safety credentials, but the revised policy she unveiled in September makes it clear that “no compliance requirement or enforcement mechanism exists” and that it should be “clear that assessments are not subject to federal approval.”

From elsewhere in the federal government, Sunday night’s death may provoke a sharper response.

If the first death attributed to a fully self-driving system was an eventuality the broader industry knew, with some resignation, would one day come, it was an awakening for representatives in Congress who have been mulling legislation, now in the Senate, called the AV START [American Vision for Safer Transportation through Advancement of Revolutionary Technologies] Act. Many safety advocates believe the legislation does nothing more than provide further shelter for an emboldened industry to do as it pleases.

“This tragedy makes clear that autonomous vehicle technology has a long way to go before it is truly safe for all who share America’s roads,” Sen. Richard Blumenthal (D-Conn.) said Monday after learning of the crash. “Congress must strengthen the AV START Act with safeguards to prevent future fatalities. In our haste to enable innovation, we cannot forget basic safety.”

Cathy Chase, president of Advocates for Highway and Auto Safety and a frequent critic of the legislation, said: “Crashes like this one are precisely what we have been worried about and are the reason why we have repeatedly called on Congress to make crucial safety improvements to legislation that would open the floodgates for millions of driverless cars.” The bill, she said, “is a threat to public safety. Road users should not be forced to serve as crash-test dummies.” Consumer Watchdog, a California nonprofit that has closely tracked autonomous-testing developments and the regulatory environment surrounding them, has called for a national moratorium on testing in the wake of Sunday’s fatal crash.

Of course, autonomous vehicles, in profound ways, are supposed to save lives rather than claim them. A former administrator with the National Highway Traffic Safety Administration said autonomous vehicles could halve the number of deaths that occur on U.S. roads, and a study released by Intel last summer suggested automated vehicles could one day reduce the annual death toll from 40,000 to 40.

While Herzberg’s death has stoked needed skepticism about automated technology, it’s important to remember that 37,461 people were killed on U.S. roads in 2016, the latest full year for which statistics are available, and that included 5987 pedestrians. Those numbers have been climbing higher in recent years despite advances in crash-avoidance and protection systems.

In calibrating their responses in the aftermath of the first death, everyone from industry executives to regulators to safety advocates should be reminded that an average of 102 people die every day on American roads. The current state of affairs brings an unacceptable level of carnage. Yet for all the promise automated vehicles hold for tomorrow, nobody has proved the technology is demonstrably safer today.