Los Angeles, CA—As California begins to finalize regulations for a groundbreaking privacy law this week, Consumer Watchdog is calling on the agency drafting those regulations to tighten the rules so users can take back their private data.

Proposed last-minute changes to the rules would eliminate the requirement to identify third-party recipients of data and would allow businesses to forgo displaying the privacy choices consumers have made.

Our personal data is sold hundreds of times a day and is worth hundreds of billions of dollars, but if the regulations for a first-in-the-nation privacy law are drafted correctly, consumers will gain unprecedented control over their personal information in just a few months.

“The Privacy Commission needs to get these rules right and these last-minute proposals would weaken otherwise tough rules in favor of California privacy rights,” said Justin Kloczko, a tech and privacy advocate for Consumer Watchdog, who authored the new report Privacy Dawn. “Your personal data is what determines what kind of loan or job you get, or ads you see. It’s the duty of the Privacy Commission to protect our rights fully.”

In a new report, Consumer Watchdog analyzed draft regulations from the body tasked with enforcing the law, the California Privacy Protection Agency (CPPA). The regulations take effect January 1, 2023, and the agency's board will convene Oct. 28-29 to finalize them. The report also examines the threat of federal preemption, as the proposed American Data Privacy and Protection Act would take away California's stronger privacy rights.

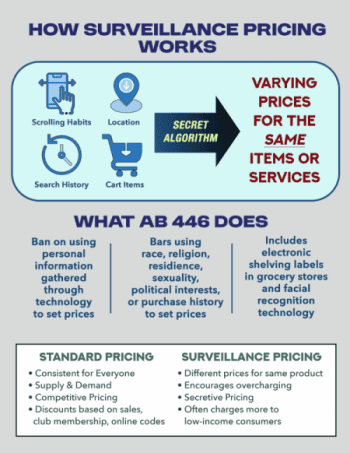

Just from browsing the Internet, the average U.S. resident has their data auctioned off 750 times a day. That is double the rate for Europeans, and the business is more lucrative than Amazon's sales. Facebook alone makes up to $1,000 per person annually from personal information. Experts say these auctions occur in fractions of a second, fueling a half-trillion-dollar digital ad market. The more specific the personal data, the more an entity is willing to pay for it.

But new privacy regulations under the voter-approved California Privacy Rights Act of 2020 (CPRA) are being finalized now. They give people the option to opt out of the selling and sharing of their data with third parties, providing a crucial shield against personal information getting into the wrong hands.

Federal preemption would override the progress of states like California that wish to build on the protections put in place at the federal level.

“As people come to understand that data has become a valuable extension of themselves, they can now take back what is theirs,” said Kloczko.

Here is how the regulations can be improved:

• Make It Easy to Opt Out: The proposed regulation says a business may provide the consumer with an option “to provide additional information” to facilitate the consumer’s request to opt out of sale/sharing. That language could be interpreted as allowing companies to repeatedly ask for a name and email address whenever someone opts out. Unnecessary hurdles in the opt-out process go against the intent of the law, and consumers are likely to grow fatigued if they are constantly asked to confirm their privacy choices.

• Immediately Honor Opt-Out of Sale/Sharing: Under the regulations, businesses have 15 days to honor a person’s request to stop selling or sharing data with third parties, as well as 15 days to limit the use and disclosure of sensitive personal information. This massive window threatens to undermine the intent of the entire law: even after someone opts out, their personal information can still be sold during the two-week grace period. Businesses should be required to honor a person’s opt-out request as quickly as they are able to sell that person’s data, which privacy experts say takes mere seconds. This gap should be eliminated.

• Display Privacy Choices: The board should not delete the requirement that a consumer’s opt-out choice be displayed. Under the board’s proposed Oct. 17 change, a business would not be required to display on its website whether it has processed a consumer’s choice to opt out of the sale/sharing of personal information, leaving people in the dark about whether they have successfully exercised their privacy rights.

• Identify Third Parties: On Oct. 17, the privacy board proposed deleting the requirement that businesses identify, in their notice at collection, the third parties that collect personal information. Users need to know who will have their information in order to exercise their privacy rights.

The report also highlights the most important regulations:

• The ability to opt out of data being shared with third parties. Some companies argued that the 2018 California Consumer Privacy Act (CCPA) only prevented the ‘sale’ of data, not the data sharing that fuels the business model of many social media and advertising companies.

• Businesses must display a “Do Not Share/Sell My Information” button and a new “Limit the Use of My Sensitive Personal Information” button on their website home pages. Consumers will now be able to limit the use of sensitive data by first parties, including health, race and ethnicity, precise location, sexual orientation, union membership, and religious beliefs.

• More transparency: Businesses must also provide a list of the categories of sensitive information they collect, state whether personal information is sold or shared, and disclose how long they intend to retain each category of personal information.

• People can also use a single opt-out signal that every website and company must honor. This enables people to notify websites of their privacy choices instead of individually opting out on each website.

• Opt-outs must be frictionless, meaning businesses can’t use deceptive language or pop-ups to fatigue users. “Dark patterns,” the deceptive design tactics businesses use to convince users to give up their privacy, are banned.

• The right to delete personal information a business has compiled, or to correct it if it is inaccurate, and to have the business notify third parties of the requested changes. The CPRA also expands deletion requests by requiring businesses to notify third parties that have the data.

• Data use must be proportionate to its purpose. A company can’t use data for a reason completely unrelated to the reason the consumer provided it. For example, a flashlight app cannot use your geolocation, because it does not need that data to function.

• Real accountability: The CPPA can perform announced or unannounced audits of entities to check for compliance with the CPRA.

– 30 –