Washington — A spate of crashes involving Tesla cars equipped with Autopilot driver-assist software has emboldened consumer groups to push federal regulators to pump the brakes on rules governing self-driving vehicles.

The safety advocates say a fatal crash in May involving a 2015 Tesla Model S with Autopilot engaged has cast doubt on the viability of self-driving cars among potential consumers.

“The public was unaware how unregulated these vehicles were and how Tesla was using the public as test pigs,” Clarence Ditlow, who is executive director of the Washington, D.C.-based Center for Auto Safety, said in a telephone interview Monday. “The American public expects safe vehicles and they expect safety standards to apply to them and this case, we didn’t see that.”

The debate over self-driving autos comes as federal regulators prepare to unveil regulations this summer for testing of fully automated cars, and it follows what is believed to be the first death in a car with a semi-autonomous driving feature engaged.

Federal regulators say preliminary reports show the fatal Tesla crash occurred on May 7 at a Florida highway intersection, when a semi-trailer turned left in front of the car, which was in Autopilot mode. Florida police said the roof of the car struck the underside of the trailer and the car passed beneath. The driver, Joshua Brown of Ohio, died at the scene.

“Neither Autopilot nor the driver noticed the white side of the tractor-trailer against a brightly lit sky, so the brake was not applied,” Tesla said in a blog posting on June 30.

The fatal crash is one of at least three high-profile accidents involving Tesla cars, including a July 1 accident involving Oakland County resident and art gallery owner Albert Scaglione in his 2016 Tesla Model X. Tesla has denied that Autopilot was on in Scaglione’s crash.

The other reported crash involving Autopilot occurred last month when the driver of a Tesla Model X SUV told local authorities the feature was active when the vehicle crashed into railing wires on a highway in Montana.

Ditlow’s Center for Auto Safety group has joined other highway safety advocates in deriding self-driving autos as “robot cars” whose safety benefits are being oversold by advocates of the autonomous vehicle technology. They cited the fatal Tesla crash as proof the technology is not ready for prime time.

The Consumers for Auto Reliability and Safety, Consumer Watchdog and Public Citizen groups wrote in a letter to National Highway Traffic Safety Administration chief Mark Rosekind that they are “dumbfounded that the fatal crash of a Tesla Model S in Florida that killed a former Navy SEAL did not give (Rosekind) pause, cause NHTSA to raise a warning flag, bring you to ask Tesla to adjust its software to require drivers’ hands on the wheel while in autopilot mode, or even to rename its ‘autopilot’ to ‘pilot assist’ until the crash investigation is complete.

“Instead, you doubled down on a plan to rush robot cars to the road, declaring that NHTSA cannot ‘stand idly by while we wait for the perfect’ and ‘no one incident will derail the Department of Transportation and NHTSA from its mission to improve safety on the roads by pursuing new lifesaving technologies,’ ” the consumer groups wrote to the highway safety chief in the letter, which is dated July 28.

In the speech the consumer groups were referencing, Rosekind said “no one incident will derail the Department of Transportation and NHTSA from its mission to improve safety on the roads by pursuing new lifesaving technologies.”

New-found awareness

The consumer groups said the recent crashes should give regulators pause about the readiness of the self-driving car technology itself.

“Tesla’s autopilot could not tell the difference between a white truck and a bright sky or between a big truck and a high-mounted road sign,” the groups wrote. “Technology with such an obvious flaw should never have been deployed, and should not remain on the road.”

Tesla has defended its Autopilot system, noting in the June 30 blog post that it “disables Autopilot by default and requires explicit acknowledgment that the system is new technology and still in a public beta phase before it can be enabled.”

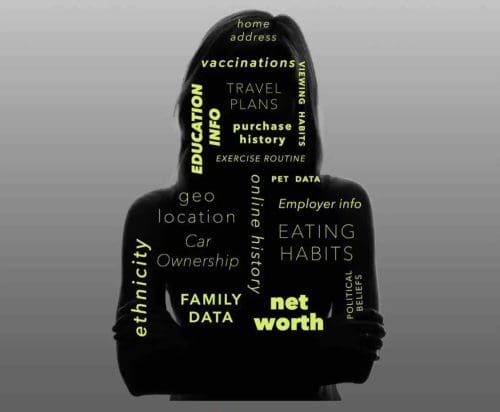

John Simpson, privacy project director at the Santa Monica-based Consumer Watchdog group, said in a telephone interview Monday, “It’s probably fair to say the Tesla crash has focused a lot of people’s attention on what’s going on. There’s an awareness that there may be technology that offers something, but we need to slow down. We shouldn’t be using the public as guinea pigs.”

He said safety advocates had been sounding the alarm about the rush to market self-driving autos long before the fatal Tesla crash occurred.

For evidence of safety advocates’ effectiveness, Simpson pointed to a recent decision by Mercedes-Benz to pull television commercials that describe its 2017 E-Class vehicles as nearly self-driving cars. The Consumers Union, Consumer Federation of America, Center for Auto Safety and former National Highway Traffic Safety Administration chief Joan Claybrook had complained the ads are “likely to mislead a reasonable consumer by representing the E-Class as self-driving when it is not.”

Two kinds of autonomous

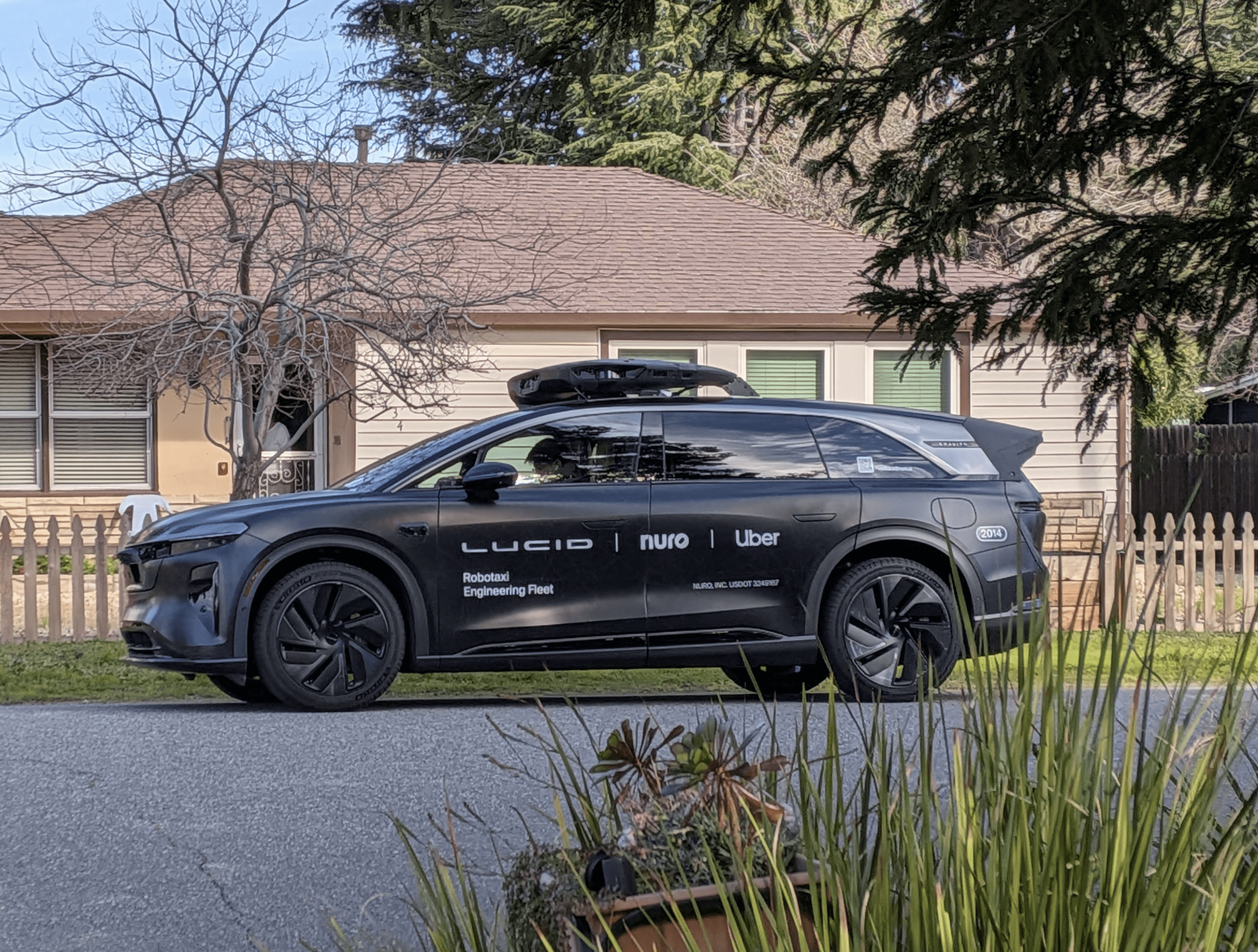

Self-driving car advocates have differentiated between fully autonomous vehicles, such as the models being tested by companies like Google, and driver-assistance features like Tesla’s Autopilot.

The Self-Driving Coalition for Safer Streets, which was formed to lobby for self-driving cars in Washington on behalf of Ford, Volvo, Google, Uber and Lyft, said it remains “dedicated to developing and testing fully autonomous vehicles in order to bring the promise of self-driving vehicles to roads and highways,” despite the escalating criticism from consumer groups.

“Our coalition was founded with the express purpose of increasing road safety and dramatically reducing the over 35,000 road fatalities that occur in the U.S. each year,” the group said in a statement provided Monday to The Detroit News.

Karl Brauer, senior director of insights for Kelley Blue Book, said safety advocates appear to have convinced regulators to at least consider crafting rules for driver-assistance features like Tesla’s Autopilot.

“I think NHTSA will now be feeling like they can’t just leave it up to the automakers with their own sense of liability,” Brauer said. “I think the fatality has elevated the awareness of government agencies and they’re going to step in on these kind of pre-autonomous driver assists.”

Ditlow, of the Center for Auto Safety, said safety advocates are not opposed to the idea of self-driving autos if they are tested rigorously before being deployed.

“Our point was not that we’re against self-driving autos, but you have to have a regulatory structure in place before they are placed on the road,” he said.

Twitter: @Keith_Laing